- Frazis Capital Partners

Living computers

An interview with Dr Hon Weng Chong

I had a chat with Cortical Labs founder Dr Hon Weng Chong about his latest breakthroughs in biological computing.

A student on the other side of the world trained one of their computing units to play Doom. The story went viral, was picked up by Elon Musk, and raised all sorts of scientific and ethical questions. It’s one thing to speculate about novel systems of intelligence and neuroscience, quite another to engineer them.

And the engineering here is astonishing. He and his team mastered the biological challenges of growing networks of neurons and keeping them alive, the hardcore computer science of reading and writing electrical signals to live systems in a novel way, and finally how to put the systems on the cloud, accessible around the world.

We covered octopus intelligence, reward functions for live intelligence systems and how they differ from large language models, what he’s learned about neuroscience and the brain through the process, and the commercial model of his company. We also touched on the ethics of building these systems, and the scale at which such a system might become conscious (rest assured, headlines aside, neurons did not suddenly wake up in Doom and have to fight for their lives against pixelated monsters). But then again, you never know!

Full transcript below.

But while on the topic, one of the ironies of AI is that pure-play companies building the tech aren’t necessarily going to survive either, as the capabilities of free open-weight models are only ever a few months behind. And we will soon reach the point where most tasks that can be replaced by LLMs are ‘solved’ and don’t require state-of-the-art models.

And if so, I don’t think anyone will shed a tear if Anthropic and OpenAI end up disrupted themselves, given the glee with which they announce timelines for when they think most of us will lose our jobs.

It’s simply too easy to switch models, as simple as typing ‘claude’ or ‘codex’ or one of the free Chinese models like ‘kimi’ to start a session. And the harnesses that made Claude Code such a breakthrough are also easily replicable.

You don’t have to use Chinese models either: US open-weight models are catching up. Arcee built its Trinity models, which match Opus-4.6 in some domains, with a team of just 30 and US$20 million. The cat is out of the bag.

For a taste of what that might look like in practice, someone at Shopify used Alibaba’s Qwen model and clever engineering to reduce their AI bill from $5.5 million to $0.07 million.

Just as SaaS bills that run into the millions are prime candidates for internal replacement, I suspect that as tokens become a more meaningful expense, more and more companies will switch from state-of-the-art models to free competitors. Something to watch.

Transcript

Michael Frazis

You had a pretty wild viral moment last week with your biological computers playing Doom.

Dr. Hon Weng Chong

Yeah, you could say that. What’s interesting is the Doom story was already about two weeks old. It had an initial spike in attention, and that was when Elon tweeted about it.

Then on Sunday, it suddenly caught a second wave of virality, and that one was on another level. It reached millions of views across multiple platforms.

The slightly painful part is that I now have crypto bros messaging me asking, “Hey, are you going to claim your Solana?”

And I’m like, guys, I run a real company. I cannot just go around collecting tokens.

Michael Frazis

That’s the thing. Every time there is a real breakthrough, it seems to attract a wave of crypto noise alongside it.

Dr. Hon Weng Chong

Exactly. And the irony is that I actually like crypto. There are some genuinely interesting ideas there.

What I have been trying to tell people is to stop focusing on things like creator fees. Instead, set up a DAO. Pool capital. Vote collectively on which projects to fund.

Otherwise, you are just handing money to individuals, and that is how people get rug pulled.

You need structure, governance, and a clear framework for how funds are allocated.

And honestly, this could change the entire funding model for research.

Right now, as a scientist, you spend months writing grant applications, and there is a high chance they get rejected anyway.

Why not go directly to a decentralised community, pitch your idea, and let them decide whether to fund it?

That is a completely different paradigm.

Michael Frazis

Yeah, and the crypto community seems to invest and spend money in a very different way. The money came very fast for some of them, and in huge quantities. There are hundreds of billions of dollars sitting on the balance sheets of, frankly, quite unusual people.

Dr. Hon Weng Chong

And I mean, it is incredible as well.

I think it was about a year and a half or two years ago that Tether, the Tether Foundation, put 200 million dollars into Blackrock Neurotech. That is one of the oldest BCI companies, and probably not the most exciting one, but they must have seen something in it.

So why don’t I explain the device.

Michael Frazis

How does the actual computer work? What is going on?

Dr. Hon Weng Chong

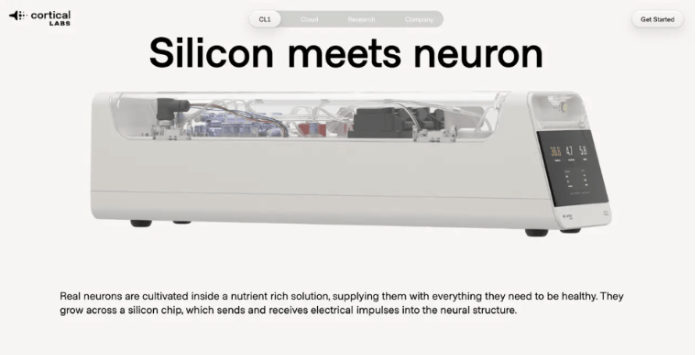

So, what we have here is the CL1. It is the basic compute unit of the cortical cloud.

Let’s go one level deeper. Think of it like Matryoshka dolls, where each layer sits inside another. The smallest component is this.

I will show you. It is a little bit cracked, but that is fine.

Michael Frazis

It does not have any live neurons in it, does it?

Dr. Hon Weng Chong

No, but we will see that later in the lab.

This is a glass chip called a microelectrode array.

It has 64 electrodes in the centre, which radiate out to the external connections.

We grow human stem cell derived neurons onto these chips. The neurons mature over time and, within about two to three weeks, begin to show electrical activity. They are naturally electrically active and are sitting on conductive material that connects to the external interface.

Using our device, we can read that activity. We can also deliver electrical stimulation back into the system. In that sense, we have read and write access to these biological neurons.

That is the smallest basic compute unit.

Michael Frazis

Let’s go one level deeper; where are the neurons, how many are there, and are they self-organising? Explain how it works.

Dr. Hon Weng Chong

As you can see, it is structured like a Petri dish. The design includes a well that holds the cell culture media, which keeps the cells alive. This allows them to absorb nutrients like glucose, carry out respiration, and manage waste.

At the base of the dish, if you look closely, you will see tiny electrodes that terminate at the centre. These extend outward in lines, connecting the internal activity to the external system.

We can grow anywhere from around 200,000 neurons, which is typical, up to 800,000 or even millions on an organoid. The scale is actually quite large, and we only interact with a small portion of it.

If you look closely, you will see a small cluster. That is where we place what we call a microfluidic PDMS structure.

If you look very closely, you can see these very small wells where we can precisely place neurons. They are connected by tiny microfluidic channels, which allow us to control how the network grows.

In earlier work, we largely let the cells grow freely and tried to shape their behaviour using input signals. What we found, however, is that when you introduce more structure, such as removing or adding channels, you gain significantly more control over the network. That also improves the computational output.

This is still an active area of research for us internally. We have not yet rolled it out to our cloud users, but it is something that is likely in the pipeline.

Michael Frazis

If you have 800,000 neurons, is there a way to think about that in terms of parameters, like you would in a large language model?

It is not just the number of neurons, it is also the connections between them. Presumably there are many more connections than neurons.

Dr. Hon Weng Chong

Not exactly. It is an interesting comparison, because in large language models, performance tends to improve with scale.

Biology does not really work in the same way. A chihuahua is not dramatically less intelligent than a Great Dane, even though the skull size is much smaller. They likely have similar numbers of neurons and synaptic connections.

What matters more is how information is processed and embodied. That is what determines how effective an organism is at processing information.

There are many organisms with larger brains and more neurons than humans, yet we are still more capable in most domains.

Take an elephant, for example. They are highly intelligent, but humans are still more capable across a wide range of tasks.

There is also strong evolutionary pressure at play. Brains are extremely energy intensive, so efficiency matters.

Michael Frazis

So why do you think that is the case? If there is so much pressure to keep brains efficient, why would an animal like an elephant have such a large brain? Is it simply because its nervous system has to support a much larger body, or is there something else going on?

Dr. Hon Weng Chong

That is probably part of it. Larger animals tend to have larger brains, so you see the same pattern with whales.

But the key difference is that the evolutionary pressures they faced did not require the same level of connectivity and complexity that human brains developed.

If you think about it, humans are actually quite weak compared to other animals. We are not particularly fast, strong, or physically resilient.

The reason we have been successful as a species is our brain. We rely on social coordination, forming groups to hunt and survive. We developed clothing to adapt to different environments, and tools to extend our capabilities.

That ability to augment ourselves is what has driven our success.

Michael Frazis

I guess it is really the size and organisation of the network. A large, coordinated system can support advanced technologies in a way that even the smartest small group could not.

Dr. Hon Weng Chong

Exactly. It is about organisation.

Bringing it back to the chip, we typically grow around 200,000 neurons now, which is actually less than what we started with. In our initial paper, we used closer to 800,000 neurons.

At that stage, we did not know if it would work. With limited funding and time, you try everything and hope something works.

So, we started with many neurons and looked for any measurable effect. Once we confirmed that it worked, we shifted our approach.

Instead of scaling up, we started exploring how small we could go while still maintaining functionality.

There are two reasons for that.

First, when you grow smaller systems, you reduce complexity. That allows you to better understand what is actually happening at a more fundamental level.

Second, from a business perspective, smaller systems are far more replicable. They are cheaper to produce and easier to scale.

So it becomes a balance between performance and cost.

Michael Frazis

Going back to the Pong example, because that is the last time we spoke about this in detail.

You had a sheet of neurons. You were shining light on it, if I remember correctly.

Dr. Hon Weng Chong

No, it was the same technology. Electrical.

Michael Frazis

Electrical, right. And they were self organising and producing an output that you could read. How did you go from that to actually teaching them to play Pong?

Dr. Hon Weng Chong

In the Pong experiment, we encoded the X and Y position of the ball using a combination of rate and place encoding.

We essentially assigned regions of neurons to represent different spatial positions. For example, neurons in one region corresponded to positions along the Y axis.

We then encoded the distance between the paddle and the ball as a frequency signal. The closer the ball, the higher the frequency. The further away, the lower the frequency.

So the setup was relatively simple in how we structured the input.
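As a rough illustration of the rate and place encoding described here, the sketch below maps a ball position to a stimulation pattern. The region count, the frequency range, and every name in it (`encode_ball`, `Stimulus`, `N_REGIONS`) are assumptions made for the example, not the values or code used in the Pong experiment.

```python
from dataclasses import dataclass

# Hypothetical layout: 8 electrode regions spanning the Y axis of the court.
N_REGIONS = 8
MIN_HZ, MAX_HZ = 4.0, 40.0  # illustrative frequency range, not from the paper


@dataclass
class Stimulus:
    region: int          # which electrode region to stimulate (place code)
    frequency_hz: float  # how fast to pulse it (rate code)


def encode_ball(ball_x: float, ball_y: float, paddle_x: float = 0.0) -> Stimulus:
    """Map a ball position in the unit square to a stimulation pattern.

    ball_y selects the region (place encoding). The horizontal distance
    between ball and paddle sets the pulse frequency (rate encoding):
    the closer the ball, the higher the frequency.
    """
    if not (0.0 <= ball_x <= 1.0 and 0.0 <= ball_y <= 1.0):
        raise ValueError("coordinates must lie in [0, 1]")
    region = min(int(ball_y * N_REGIONS), N_REGIONS - 1)
    closeness = 1.0 - abs(ball_x - paddle_x)  # 1 = at the paddle, 0 = far wall
    frequency = MIN_HZ + closeness * (MAX_HZ - MIN_HZ)
    return Stimulus(region=region, frequency_hz=frequency)


near = encode_ball(ball_x=0.1, ball_y=0.5)
far = encode_ball(ball_x=0.9, ball_y=0.5)
print(near)
print(far)
```

Both calls land in the same region because the ball's height is the same, while the nearer ball is pulsed at the higher frequency.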

Doom is actually more interesting. To be completely transparent, we did not run the Doom experiment ourselves.

It was developed by a computer science student named Sean Cole from the University of Sussex, using our system and tools.

That was incredibly validating, because it confirmed our original thesis. If you build the tools, people will come.

Even investors had questioned whether that would happen.

Michael Frazis

So how do you go from that Pong setup to something like Doom? Presumably scaling across multiple systems.

Dr. Hon Weng Chong

You have to go back to the beginning.

We started in 2019 with funding to test whether neurons could actually perform useful computation. We demonstrated that with the Pong paper in 2022.

After that, we received a large number of inbound questions. People kept asking when we would move to something like Doom.

At the same time, academic researchers were asking practical questions. Which machines should we buy? What software should we use? What cells should we work with?

When you hear those questions repeatedly, you start to realise there may be a market.

One of the reasons this field has not scaled is that it operates like a cottage industry. Each lab builds its own hardware and software.

That is inefficient. You do not get economies of scale, and progress is slower because everyone is reinventing the same tools.

A lab might raise five million dollars in funding, but spend a significant portion of that building infrastructure instead of doing actual research.

Multiply that across multiple labs, and you end up with a large amount of duplicated effort.

So our approach was to take ownership of building the hardware, software, and biological protocols, package it into a platform, and provide it to researchers.

That way, they can focus on discovery instead of rebuilding the same systems repeatedly.

Michael Frazis

And in terms of getting useful computation out of these neurons, how does that actually work? Is there a reward function involved?

Dr. Hon Weng Chong

Yes, there is a reward function. There are multiple approaches, but I personally prefer reinforcement learning, coming from a machine learning background.

Some researchers also explore reservoir computing, which is more unsupervised and based on clustering. It can work, but I am less inclined toward that approach.

The key idea is to let neurons do what they naturally do, which is reduce entropy.

You provide them with a structured environment, such as a game like Pong, where there are clear rules. The ball bounces off walls, the paddle keeps the game going, and missing the ball ends the game.

Those rules introduce structure into the information.

The neurons then adapt to that structure. You guide them by providing reward or punishment signals, nudging them toward better performance.

Michael Frazis

What do those reward and punishment signals actually look like?

Dr. Hon Weng Chong

We do not use something like glucose or physical stimulation. Instead, we vary the predictability of the input signals.

By adjusting the entropy of the information we send, we can push the system in one direction or another.

Neurons naturally seek to self organise. The more structured and predictable the environment, the more they adapt to it over time.

This idea is influenced by theoretical work from Karl Friston at UCL, which explores how biological systems minimise uncertainty.

This relates to what is known as the free energy principle.

At a high level, it suggests that if you have a system such as a brain, a network of neurons, or any biological organism, it maintains its own internal model of the world. The external world also has its own structure and distribution of information.

The goal of the system is to minimise the difference between its internal predictions and what it actually experiences. In other words, it is constantly trying to reduce surprise.

If that is the underlying driver, then by changing the environment and making it more or less predictable, you can influence how the neurons behave. You can effectively guide them toward one outcome or another.
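The idea of steering a network by making its input more or less predictable can be sketched in a few lines. This is a toy illustration only: the function names, the electrode count, and the choice of a fixed pattern for reward versus random pulses for punishment are assumptions, not the stimulation protocol Cortical Labs uses.

```python
import math
import random
from collections import Counter


def shannon_entropy_bits(symbols):
    """Empirical Shannon entropy (bits per symbol) of a sequence."""
    counts = Counter(symbols)
    total = len(symbols)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def feedback_sequence(hit: bool, n_pulses: int = 64, n_electrodes: int = 8, seed: int = 0):
    """Return the electrode index to pulse at each step.

    Hypothetical scheme: a hit earns a fixed, repeating pattern (predictable),
    a miss earns pulses on randomly chosen electrodes (unpredictable).
    """
    if hit:
        return [0] * n_pulses
    rng = random.Random(seed)
    return [rng.randrange(n_electrodes) for _ in range(n_pulses)]


reward_entropy = shannon_entropy_bits(feedback_sequence(hit=True))
punish_entropy = shannon_entropy_bits(feedback_sequence(hit=False))
print(f"reward: {reward_entropy:.2f} bits, punishment: {punish_entropy:.2f} bits")
```

The predictable sequence carries zero bits of surprise per pulse, while the random one approaches the three-bit maximum for eight electrodes.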

Michael Frazis

It is interesting that such a simple principle seems to explain what is happening in these networks.

They are essentially just trying to make sense of the world around them, and that alone acts as a form of reward.

Dr. Hon Weng Chong

Exactly. It is fundamentally a survival mechanism.

The better an organism can predict its environment, the better it can respond to threats and opportunities.

If you can anticipate what is coming next, you are far more likely to survive and adapt.

For example, if you are a rabbit and you can predict that an eagle is about to attack, you can react faster and escape. That increases your chances of survival and passing on your genes.

Conversely, if you are an eagle and you can better predict where the rabbit is going to move, you are more likely to catch it. That means you survive, eat, and reproduce.

So, information prediction is fundamentally a survival mechanism.

Michael Frazis

In that case, the eagle is effectively anticipating what the rabbit is going to do.

Dr. Hon Weng Chong

Exactly. And in many ways, we are doing the same thing in our own minds.

Take optical illusions as an example. A simple one is to close one eye and look straight ahead. You can see your nose. Switch eyes, and you still see your nose. But when you open both eyes, your brain essentially removes it from your perception.

That is because the brain is not just passively processing information. It is actively constructing a representation of the world.

That is why people like Yann LeCun talk about world models. That is exactly what we are doing in our heads.

We are building internal models, constructing a three dimensional understanding of space, and making sense of the signals reaching our eyes.

Michael Frazis

It is fascinating. And then you take that science and turn it into a product, where you are putting inputs in and getting outputs back.

Dr. Hon Weng Chong

Yes, but there is still a lot we do not fully understand about how these systems work.

We are only at the beginning of exploring this in depth.

Michael Frazis

Do you think that principle alone is enough to explain most of what is going on, or is there more to it?

Dr. Hon Weng Chong

I think there is a lot more happening.

Like in most areas of science, what we rely on are abstractions. We use the best framework available at the time to explain the phenomena we observe.

The free energy principle is useful, but it is not the end point. There are likely many other ways of explaining what is going on.

It also has its limitations. One example is the dark room problem.

If these systems are trying to minimise surprise, then hypothetically, if you place a person, a brain, or a network of neurons in a completely dark and predictable environment, why would it ever leave?

In that situation, there is no surprise at all. Everything is fully predictable.

Yet we know that organisms do not stay in that state. They actively leave, even though doing so increases uncertainty.

So clearly, the principle does not explain everything.

This is a field that is still wide open for experimentation and new theories that better capture what we are observing.

Michael Frazis

It feels like there is a big difference between science and engineering in what you are doing.

You do not necessarily need to fully understand everything, but you need to understand it well enough to manipulate the system and get it to behave in a certain way.

Dr. Hon Weng Chong

Exactly.

Michael Frazis

What are the main things you have learned about networks of neurons through this process of turning them into a computer that can be accessed?

Dr. Hon Weng Chong

Internally, we have learned a number of things, and there is a lot of deep science behind it.

One of the most interesting concepts we encountered is criticality.

There is a similar concept in physics. For example, water has critical transition points, such as between liquid and solid at zero degrees Celsius, and between liquid and gas at one hundred degrees.

At these points, the system is highly sensitive. Small changes can shift it between states.

Neurons appear to operate in a similar regime, often referred to as neurocriticality.

In healthy systems, neurons tend to sit at a critical point defined by what we call a branching ratio of one.

There are multiple ways to measure criticality, but branching ratio is one of the simplest to understand.

A branching ratio of one means that a signal from one neuron propagates cleanly through the network, passing from one neuron to the next without dying out or exploding uncontrollably.

If the ratio is less than one, for example 0.8, the signal tends to fade out. The network becomes underactive and information does not fully propagate.

If the ratio is greater than one, the signal amplifies as it spreads. Over time, this can activate the entire network and lead to runaway activity, similar to what we see in seizures.
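To make the three regimes concrete, here is a minimal sketch of a branching process, a standard toy model for this kind of signal propagation. It is not how Cortical Labs measures criticality on real recordings; the fan-out, the activity cap, and the function names are all assumptions chosen to keep the example small.

```python
import random


def simulate_avalanche(sigma: float, rng: random.Random,
                       fanout: int = 10, max_steps: int = 200, cap: int = 500):
    """Simulate one avalanche started by a single active neuron.

    Each active neuron contacts `fanout` others and activates each with
    probability sigma / fanout, so the expected number of descendants per
    active neuron is sigma (the branching ratio). The run stops when
    activity dies out, exceeds `cap`, or `max_steps` is reached.
    Returns the number of active neurons at each time step.
    """
    p = sigma / fanout
    active, history = 1, [1]
    for _ in range(max_steps):
        if active == 0 or active > cap:
            break
        active = sum(1 for _ in range(active * fanout) if rng.random() < p)
        history.append(active)
    return history


def estimate_branching_ratio(histories):
    """Naive estimate: total descendants divided by total ancestors."""
    ancestors = sum(sum(h[:-1]) for h in histories)
    descendants = sum(sum(h[1:]) for h in histories)
    return descendants / ancestors


rng = random.Random(0)
for sigma in (0.8, 1.0, 1.2):
    runs = [simulate_avalanche(sigma, rng) for _ in range(300)]
    died_out = sum(1 for h in runs if h[-1] == 0)
    print(f"sigma={sigma}: estimate={estimate_branching_ratio(runs):.2f}, "
          f"died out in {died_out}/300 runs")
```

Below one, every avalanche fizzles; above one, a sizeable share of runs hit the activity cap, which is the toy equivalent of runaway activity.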

What we found in our experiments was particularly interesting.

When neurons were not placed in a structured environment, such as a game, they tended to drift away from this critical state. Their branching ratio would move either below or above one.

However, when we placed them into a structured environment like Pong, where they were interacting with a defined set of rules and feedback, they moved back toward that critical point.

Michael Frazis

So, once they are in the game environment, their criticality metrics actually change?

Dr. Hon Weng Chong

Exactly. Their branching ratio and overall network dynamics shift back toward that optimal state.

Michael Frazis

And that is based on their self organisation? Are they physically moving as well?

Dr. Hon Weng Chong

Yes, they can physically move.

The neurons that are not constrained within the wells will actually shift and reorganise themselves. That can be quite challenging, because you then have to constantly monitor and adjust for changes in position.

It effectively introduces a second dynamic system on top of the network behaviour, which adds another layer of complexity.

But going back to criticality, seeing this behaviour led us to believe that it may be fundamental to information processing.

If a system is outside that critical zone, it does not process information efficiently. That could reduce its ability to adapt and survive.

So internally, we now use criticality as a key metric for assessing how well a network is functioning and processing information.

Another interesting observation is the amount of data required to train these systems.

Compared to traditional reinforcement learning systems running on GPUs, biological systems require dramatically less data. In some cases, up to 5,000 times less.

Michael Frazis

So, they are learning much faster than traditional systems?

Dr. Hon Weng Chong

Exactly.

We saw this in a study where we compared biological neurons with standard reinforcement learning algorithms.

We used the same Pong environment and tested three algorithms, including DQN, which underpinned DeepMind’s Atari-playing agents, as well as more recent approaches such as A2C and PPO.

To make the comparison fair, we constrained all systems to the same limited dataset. The game ran at one hundred frames per second, and we only allowed twenty minutes of gameplay, which amounts to roughly one hundred and twenty thousand frames of data.

The reinforcement learning models were unable to learn effectively from that amount of data.

Michael Frazis

One hundred and twenty thousand frames is not enough.

Dr. Hon Weng Chong

Exactly. But the biological system was still able to learn and adapt.

Michael Frazis

So, you are converting the game into electrical signals, feeding that into the neurons, and they self organise to minimise entropy?

Dr. Hon Weng Chong

Yes, that is correct.

Michael Frazis

And what happens next? How do they actually start playing the game?

Dr. Hon Weng Chong

You simply let them play.

The key difference is that there is no separation between training and inference. It all happens in the same loop.

Michael Frazis

Explain that a bit more.

Dr. Hon Weng Chong

In traditional machine learning, you train a model over many iterations, adjust the weights using backpropagation, and then deploy it for inference.

Biological systems do not work that way.

If you and I were learning a game, we would improve while playing. The learning process and the execution happen at the same time.

That is exactly what happens here. The system continuously updates itself as it interacts with the environment.

There is no distinct training phase and no separate inference phase.

This makes it an online learning system, which is particularly powerful for dynamic environments that are constantly changing.
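The contrast between train-then-deploy and online learning can be shown with a deliberately tiny example. The one-parameter model, the learning rate, and the step change in the signal below are all assumptions picked for illustration; nothing here models neurons or any real training pipeline.

```python
def run(stream, learn_online: bool, lr: float = 0.1, freeze_after: int = 50):
    """Track a scalar signal with a one-parameter model w.

    learn_online=False mimics train-then-deploy: w is updated for the first
    `freeze_after` samples, then frozen for inference.
    learn_online=True keeps updating w on every sample it acts on.
    Returns the mean absolute error over the samples seen after `freeze_after`.
    """
    w, errors = 0.0, []
    for t, target in enumerate(stream):
        prediction = w                  # act (inference)
        error = target - prediction
        if t >= freeze_after:
            errors.append(abs(error))
        if learn_online or t < freeze_after:
            w += lr * error             # learn from the same step
    return sum(errors) / len(errors)


# The environment changes partway through: the signal jumps from 1.0 to 3.0.
stream = [1.0] * 100 + [3.0] * 100
frozen_error = run(stream, learn_online=False)
online_error = run(stream, learn_online=True)
print(f"frozen: {frozen_error:.3f}, online: {online_error:.3f}")
```

The frozen model keeps predicting the old value after the jump, while the online model recovers within a few dozen steps because acting and learning share one loop.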

Michael Frazis

When you think about the billions of dollars being spent on training large models today, it does highlight how inefficient those systems are compared to biological ones.

Dr. Hon Weng Chong

Exactly.

Large models require continuous retraining, and their knowledge is effectively frozen at a specific point in time.

They do not learn from repeated use in the same way biological systems do.

Michael Frazis

Unless you store the information separately.

Dr. Hon Weng Chong

Exactly. That is essentially what we are doing with approaches like retrieval systems, where models pull in external information and process it.

But internally, their weights do not change. If you gave a language model completely new information, like a newly discovered species on another planet, it would not be able to meaningfully incorporate that knowledge. It simply cannot update its internal structure in real time.

Michael Frazis

Is there a way of training language models that would be more aligned with how our brains work? Potentially more efficient as well?

Dr. Hon Weng Chong

Possibly.

That said, language models are extremely powerful tools. While they require significant time, data, and energy to train, no human has access to the same volume of information.

They can identify high dimensional patterns and generate solutions in ways that are difficult for humans to replicate. For example, when you ask for code, they can produce highly efficient solutions by drawing on vast repositories of knowledge.

Our brains are not optimised for that kind of task.

So, I think language models are incredibly valuable. The real question is how we use them.

In many ways, they represent the next evolution of search. From a business perspective, what makes them so disruptive is that almost anyone can now build something functionally similar to a search engine.

You can run a model locally and ask it questions in natural language, receiving structured answers. Previously, only a few companies had the resources to index and search the internet at scale.

Michael Frazis

Do you think it would be possible to recreate what is happening in the brain in a computer? If you mapped every neuron and connection, could you simulate it?

Dr. Hon Weng Chong

Yes and no.

You are probably referring to work like the fruit fly connectome. Mapping connections is one thing. Actually simulating how signals move through that network is much more complex.

It is like mapping roads between cities versus modelling how cars travel across them. You need to understand the dynamics, not just the structure.

Neurons are governed by complex equations, and each one behaves slightly differently. That makes large scale simulation extremely difficult.

The real question becomes what level of abstraction is sufficient.
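The roads-versus-traffic point can be illustrated with a toy chain of three neurons. The sketch uses a simple leaky integrate-and-fire rule, which is one common abstraction and not a claim about the equations real neurons follow; the weights, leak values, and function name are assumptions. The wiring is identical in both runs and only the leak changes.

```python
def simulate_chain(leak: float, threshold: float = 1.0, weight: float = 0.6,
                   drive: float = 1.0, n_neurons: int = 3, steps: int = 60):
    """Simulate a feed-forward chain 0 -> 1 -> 2 of leaky integrate-and-fire units.

    Each step, a neuron's potential decays by `leak`, then adds its input:
    a constant `drive` for neuron 0, or `weight` if the previous neuron
    in the chain spiked on the previous step. Crossing `threshold`
    produces a spike and resets the potential to zero.
    Returns the spike count of each neuron.
    """
    v = [0.0] * n_neurons
    prev_spikes = [False] * n_neurons
    counts = [0] * n_neurons
    for _ in range(steps):
        spikes = []
        for i in range(n_neurons):
            incoming = drive if i == 0 else (weight if prev_spikes[i - 1] else 0.0)
            v[i] = leak * v[i] + incoming
            fired = v[i] >= threshold
            if fired:
                v[i] = 0.0
                counts[i] += 1
            spikes.append(fired)
        prev_spikes = spikes
    return counts


slow_leak = simulate_chain(leak=0.9)  # potentials persist between inputs
fast_leak = simulate_chain(leak=0.5)  # potentials decay quickly
print("leak=0.9 spike counts:", slow_leak)
print("leak=0.5 spike counts:", fast_leak)
```

With the same connections, the signal reaches the last neuron when potentials persist and never reaches it when they decay quickly, so the connectome alone does not determine the activity.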

Michael Frazis

What do you think actually matters?

Dr. Hon Weng Chong

There are several factors.

There is a spatial component, where neurons physically move and reorganise. That could be analogous to adjusting weights in a model.

There is also the structure of the network, or the connectome, which defines how neurons are linked.

Then there are gating mechanisms, which determine whether a neuron fires or not.

In machine learning, we apply the same activation function across the entire network. But in biological systems, each neuron can behave differently.

If every neuron has a different activation function, you lose the ability to efficiently compute everything in a single operation. The system becomes far more complex.

We also do not have just one type of neuron. Even in our systems, we work with a mix of different cell types.

Michael Frazis

Are different types of cells involved?

Dr. Hon Weng Chong

Yes.

Cortical neurons are primarily responsible for processing. You can think of them as similar to a CPU.

Hippocampal neurons are associated with memory and spatial reasoning. They help with tasks like navigation and understanding position.

Then you have glial cells, which support the neurons. They manage waste, provide nutrients, and help maintain overall system health.

We used to think glial cells were not very important, but it turns out they play a critical role.

Michael Frazis

Are they immune cells, or is that too simplistic?

Dr. Hon Weng Chong

Some of them do have immune-like functions, but more broadly, they act as the support system that keeps everything running properly.

One of the really interesting things about neurons is that they generally do not regrow.

Most of the neurons you have stay with you for your entire life. This is very different from other cells in the body, like skin or cheek cells, which are constantly replaced.

There is some evidence of limited regeneration in certain parts of the brain, such as deep within the hippocampus, but broadly speaking, neurons are largely permanent.

That is why events like strokes can be so damaging. When neurons are lost, they are not easily replaced.

There is some promising work in regenerative medicine aimed at addressing this, including efforts to treat conditions like Alzheimer’s, but under normal biological conditions, neurons do not readily regrow.

On the other hand, glial cells do regenerate.

In fact, many brain cancers originate from glial cells rather than neurons. It is very rare for neurons themselves to become cancerous because they do not divide. They are also relatively stable at a genetic level.

More broadly, the more frequently a cell replicates, the higher the chance of genetic instability. That increases the likelihood of mutations and, in some cases, cancer.

That is why many cancers arise in tissues where cells are constantly dividing, such as the colon.

Some cancers, like certain types of breast cancer, are also influenced by hormonal factors.

Cancers like skin cancer and bowel cancer tend to occur in tissues where cells turn over quickly. But putting that aside, glial cells play an important role in keeping neurons alive and functioning properly.

When you combine different cell types, what we see at Cortical Labs is that you get better learning performance compared to using a single cell type on its own.

They also tend to live longer.

Michael Frazis

Is it difficult to keep these systems alive and stable?

Dr. Hon Weng Chong

They do live longer, and we also see improved spiking activity.

Michael Frazis

What do you mean by better spiking activity?

Dr. Hon Weng Chong

Electrical activity. We observe sharper signals, more consistent firing, and generally more active cells.

That is a key indicator of a healthier and more functional network.

This reflects the broader nature of Cortical Labs. We are still a deep tech, research driven company with many open scientific questions.

The difference now is that we also have a commercial focus. A lot of our research is directed toward improving the performance of the systems we offer to users.

If we discover a better combination of cell types that improves learning, that gets integrated into our products.

If we develop a more effective way to stimulate the cells, that gets built into our API, so users can access it directly through something as simple as a Python function call.

Michael Frazis

Let’s come back to the device itself. How does this actually fit into the system?

Dr. Hon Weng Chong

The chip sits inside a neural chamber.

You cannot easily see it, but there are electrodes that connect to the outer edges of the chip and establish electrical contact. That is how we read and write activity.

You can think of it as analogous to a neural interface.

At the back of the unit, there is a base compute system. It runs on a quad core ARM processor along with an FPGA module, which we use extensively to modulate signals.

Michael Frazis

So you have had to build the entire system. You are converting biological electrical signals into something a computer can process and put online.

Dr. Hon Weng Chong

Exactly.

We had to do a significant amount of chromatography work to determine the right concentrations for everything.

We also needed to design filtration systems that could retain large molecular weight growth factors while still allowing smaller molecules to pass through.

For example, you want glucose to freely equilibrate across the membrane, but you need to trap larger proteins.

To achieve that, we sourced filters with an 80 kilodalton cutoff. Albumin, which is around 150 kilodaltons, is one of the larger proteins we want to retain. It is similar to what you find in egg white, which is mostly albumin.

At the same time, we need to remove waste products such as ammonia, which is a byproduct of cellular metabolism. CO2 is also generated.

Ammonia is particularly important because it affects pH levels. Maintaining a very stable pH is critical, as even small changes can damage or kill neurons.

This is true in humans as well. Conditions like alkalosis and acidosis disrupt potassium transport across cell membranes. Since neurons are highly electrically active, they rely heavily on potassium ions moving in and out of the cell.

So, the system manages gas inputs including oxygen, CO2, and nitrogen, while maintaining a tightly controlled biochemical environment.
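
The interview does not say how the unit regulates pH. One common approach in cell culture is a bicarbonate buffer balanced against CO2, and the sketch below assumes that approach. It uses the textbook Henderson-Hasselbalch relation with physiological constants (pKa of 6.1, CO2 solubility of 0.03 mmol/L per mmHg); the function name and the example values are illustrative and are not taken from Cortical Labs.

```python
import math


def bicarbonate_ph(bicarbonate_mmol_per_l, pco2_mmhg, pka=6.1, co2_solubility=0.03):
    """Estimate pH of a bicarbonate/CO2 buffer (Henderson-Hasselbalch).

    The default constants are textbook values for plasma at body temperature;
    applying them to a culture medium is an assumption.
    """
    if bicarbonate_mmol_per_l <= 0 or pco2_mmhg <= 0:
        raise ValueError("bicarbonate and pCO2 must be positive")
    dissolved_co2 = co2_solubility * pco2_mmhg  # mmol/L of dissolved CO2
    return pka + math.log10(bicarbonate_mmol_per_l / dissolved_co2)


# Illustrative values only: 24 mmol/L bicarbonate at two CO2 partial pressures.
normal_ph = bicarbonate_ph(24.0, 40.0)
high_co2_ph = bicarbonate_ph(24.0, 80.0)
print(f"pH at 40 mmHg CO2: {normal_ph:.2f}")
print(f"pH at 80 mmHg CO2: {high_co2_ph:.2f}")
```

Under these assumptions, raising the CO2 level lowers the pH, which is one reason gas inputs and pH stability would be managed together.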

We also have inputs and outputs for the media, along with USB-C and gigabit ethernet connections to extract the data.

Michael Frazis

So that is how you get the actual data out?

Dr. Hon Weng Chong

Exactly.

Michael Frazis

That is some serious engineering.

Dr. Hon Weng Chong

Yes. We can actually open up some of the units and show you the electronics behind them.

Michael Frazis

Have you built a team to handle all of this?

Dr. Hon Weng Chong

We have been very fortunate to build a strong team. It is highly multidisciplinary and very forward thinking.

Bringing this together has been a challenge in itself. You need people who can work across biology, engineering, and software, and who are willing to tackle something that has not really existed before.

We have sourced talent in a number of ways, including LinkedIn, job postings, and referrals.

Australia has excellent talent. The key is creating opportunities for people to apply it.

Michael Frazis

It seems like the kind of project that would attract very smart people. It is so novel.

Dr. Hon Weng Chong

Yes, the novelty definitely helps.

Michael Frazis

So when someone is playing Doom on the other side of the world through an API, what is actually happening inside the system?

Dr. Hon Weng Chong

The Doom code is available publicly, and users can access the Cortical Cloud platform to run it themselves.

What is interesting is that the system is not purely biological. It operates as a hybrid.

Sean, who developed the Doom implementation, designed it as a tandem system. There is a reinforcement learning model, likely PPO, running alongside the neurons.

He also built a bridge between the digital and biological systems. A convolutional neural network takes the pixel data from the game and converts it into spike trains that the neurons can understand.

These spike trains are then sent into the biological system as electrical signals. The neurons process that information, and their responses are read back out through the same interface.

The signals get converted from spike trains back into controls like WASD and the space bar.
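
The interview describes the bridge only at a high level: features from the game frame become spike trains, and recorded spikes become key presses. The sketch below illustrates that round trip with simple rate coding and a most-active-channel decoder. Both choices are assumptions, not the method used in the Doom implementation, and every name here (`encode_rates_to_spikes`, `decode_spikes_to_action`, `ACTIONS`) is hypothetical.

```python
import random

# Hypothetical mapping from output channels to game controls.
ACTIONS = ["W", "A", "S", "D", "SPACE"]


def encode_rates_to_spikes(rates_hz, duration_s=0.1, dt_s=0.001, seed=0):
    """Turn one firing rate per input channel into a binary spike train.

    Each time step emits a spike with probability rate * dt, a Bernoulli
    approximation of a Poisson process. Returns one list of 0/1 per channel.
    """
    rng = random.Random(seed)
    steps = round(duration_s / dt_s)
    return [
        [1 if rng.random() < min(rate * dt_s, 1.0) else 0 for _ in range(steps)]
        for rate in rates_hz
    ]


def decode_spikes_to_action(spike_trains, actions=ACTIONS):
    """Pick the action whose output channel fired the most spikes."""
    if len(spike_trains) != len(actions):
        raise ValueError("expected one spike train per action")
    counts = [sum(train) for train in spike_trains]
    best = max(range(len(counts)), key=counts.__getitem__)
    return actions[best], counts


# Stand-in for features a vision model might produce from a game frame.
features = [0.1, 0.9, 0.3]
rates = [f * 100.0 for f in features]  # scale features to a 0-100 Hz range
stim = encode_rates_to_spikes(rates)

# Stand-in for responses read back from five output channels.
recorded = [
    [0, 1, 0, 0],  # W
    [1, 1, 0, 1],  # A
    [0, 0, 0, 0],  # S
    [0, 1, 0, 0],  # D
    [0, 0, 0, 0],  # SPACE
]
action, counts = decode_spikes_to_action(recorded)
print(action, counts)
```

In the system described here, the stimulation and the recorded responses would pass through the electrode interface rather than hand-written lists.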

Michael Frazis

That is incredible.

Dr. Hon Weng Chong

There is still some machine learning involved in that process.

But what is important is that if you remove the biological component, the system stops working. That is our control.

If it were purely a machine learning system, it would continue to function without the cells. But when we remove the neurons, it no longer plays.

We were very careful about this. When we first saw the results, we asked Sean to run additional controls.

When we fed the system fake spike data, it did not work. That confirmed that the biological component is essential to the process.

There was a lot of commentary online about this. Some people described it as human neurons being placed into the world of Doom and immediately confronted by monsters.

Michael Frazis

Which sounds quite terrifying.

Dr. Hon Weng Chong

It does, but that is not really what is happening.

You could replace Doom with something completely different, like a simple or even playful environment, and the system would behave in the same way.

The neurons are not interpreting meaning or context. They are simply responding to structured inputs.

The idea of demons or monsters is a human interpretation. Without that cultural context, it is just patterns and signals.

Michael Frazis

That makes sense.

I am curious about your thoughts on consciousness and emotion.

We are talking about biological systems using human neurons. At what level of organisation or scale do you think consciousness emerges?

Dr. Hon Weng Chong

That is a very deep philosophical question, and it depends heavily on how you define consciousness.

It is what David Chalmers refers to as the hard problem of consciousness.

At a fundamental level, the only thing you can truly be certain of is your own awareness. You cannot definitively prove that anyone else is conscious.

That makes the question extremely difficult to answer.

There are, however, proxies that people try to use.

The challenge is that the field itself does not even have a universally agreed definition. What is consciousness? How is it different from sentience? Are they the same thing?

Even the terminology is still debated.

Michael Frazis

What is your definition?

Dr. Hon Weng Chong

One perspective I find useful comes from a 2022 paper, which defines sentience as the ability of a system to respond to stimuli.

That is a relatively simple definition.

For example, a single cell organism like a paramecium can respond to its environment. If you disturb it, it moves away. If you introduce nutrients, it moves toward them.

But that does not necessarily mean it is conscious.

It becomes very difficult to assign levels of consciousness to such systems.

While they may exhibit basic responsiveness, I would not consider that to be consciousness.

From there, you can think about a spectrum of increasingly complex organisms, each with more sophisticated behaviours and capabilities.

Even across species, we tend to assign different levels of consciousness based on cultural norms.

For example, most people would not eat a dog because they perceive it as more conscious or emotionally complex. But if you think about it, a dog is likely just as intelligent or conscious as a pig.

Yet people are generally comfortable eating pigs because they are seen as a lower life form.

So the question of consciousness becomes deeply philosophical, and in many ways, cultural or even religious.

When it comes to our system, I do not believe it reaches that level. We are working with around 200,000 neurons, without the kind of structure you would see in a full brain.

You can think of it as a very simplified system, more like a flattened network rather than a fully organised brain.

Michael Frazis

So even if it has a high number of parameters, it may still lack the necessary structure?

Dr. Hon Weng Chong

Exactly. Structure likely plays a critical role.

For example, cortical columns and the three dimensional organisation of the neocortex may be essential for higher order processing.

Our system is closer to a two dimensional or slightly layered structure. There are variations in density, but it is not comparable to a full three dimensional structure such as a brain or an organoid.

Michael Frazis

If you think about it from first principles, a single neuron is clearly not conscious. Two neurons are not conscious. So where does that transition happen?

Dr. Hon Weng Chong

That is the key question, and we do not have a clear answer.

Part of the challenge is that we do not yet have the tools to fully investigate it. Much of what we know comes from observing what happens when systems break down.

For example, in neurology and psychiatry, we study conditions where brain function is impaired.

One well known example is blindsight.

These individuals are clinically blind. If you show them an eye chart, they cannot read anything.

But if they are walking and encounter an obstacle, they will avoid it. If you throw a ball at them, they may catch it.

However, they have no conscious awareness of doing so.

So they are able to respond to stimuli, but without a conscious experience.

That highlights a distinction between basic responsiveness and conscious awareness.

It also shows how little we truly understand about how consciousness emerges.

Michael Frazis

I have heard of similar ideas with people who are blind being able to sense their surroundings through sound, almost like a form of sonar.

Dr. Hon Weng Chong

Yes, that is well documented.

In individuals with little or no vision, the brain often repurposes the visual cortex to process auditory information. In a sense, they can “see” through sound.

Some people are even able to navigate spaces by interpreting echoes, similar to how bats use sonar.

This highlights the adaptability of the brain.

Michael Frazis

It does seem like an evolutionary advantage.

Dr. Hon Weng Chong

Exactly. It also speaks to neuroplasticity.

The brain is constantly seeking the most useful information available.

If visual input is limited, it will rely more heavily on other senses, such as sound, to build an understanding of the environment.

Michael Frazis

You have worked with systems of around 800,000 neurons. How many neurons are there in the human brain?

Dr. Hon Weng Chong

Roughly tens of billions. It is around 50 to 60 billion neurons, with an even larger number of synaptic connections.

Michael Frazis

What do you think would happen if you scaled your system up to that level? Would it be capable of more than playing Doom?

Dr. Hon Weng Chong

Possibly. It is difficult to say.

That is part of the reason we built the system in a modular way, with a cloud component. One of the next steps is to connect multiple units together.

Since each system has networking capability, we can transmit information between them and begin to create more complex, structured networks.

This is an active area of research for us. Once we develop a robust framework, we plan to release it as part of our API so users can scale and combine multiple units.

That could enable more advanced tasks, potentially even collaborative or multi agent systems.

Michael Frazis

So right now, the neurons are controlling a relatively simple input system. A few directional controls and an action button.

Could you expand that to something like text generation?

Dr. Hon Weng Chong

It is already being explored.

One of our early access users connected a language model to the system, where neuronal activity influenced word selection.
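
The interview gives no detail on how neuronal activity influenced word selection. One plausible arrangement, sketched here purely as an assumption, is to add a bias derived from spike counts to a language model's scores before sampling. The function names, the `strength` parameter, and the example numbers are all hypothetical.

```python
import math
import random


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def bias_word_choice(candidates, model_logits, spike_counts, strength=0.5, seed=0):
    """Sample a word after nudging each logit by a spike-count bias.

    spike_counts holds one count per candidate word. Counts are centred on
    their mean, so the bias shifts preference between words without adding
    a constant offset to every score.
    """
    if not (len(candidates) == len(model_logits) == len(spike_counts)):
        raise ValueError("inputs must have the same length")
    mean_count = sum(spike_counts) / len(spike_counts)
    biased = [
        logit + strength * (count - mean_count)
        for logit, count in zip(model_logits, spike_counts)
    ]
    probs = softmax(biased)
    word = random.Random(seed).choices(candidates, weights=probs, k=1)[0]
    return word, probs


# Illustrative inputs: the model is indifferent, the spike counts are not.
words = ["run", "hide", "shoot"]
word, probs = bias_word_choice(words, [1.0, 1.0, 1.0], [2, 9, 1])
print(word, [round(p, 3) for p in probs])
```

With `strength=0` the model's own preferences are left untouched, which gives a natural control condition for this kind of experiment.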

Someone else connected it to a trading system. It produced some interesting behaviour, although not necessarily profitable.

There is a lot of experimentation happening at the moment.

Michael Frazis

So in theory, you could feed in text and allow the system to learn patterns over time?

Dr. Hon Weng Chong

Potentially. It is still an open question.

We are only beginning to explore what these systems can do when you increase the number of outputs and the complexity of the task.

Michael Frazis

If they start producing words, that feels very different to something like an octopus or another simple system.

Dr. Hon Weng Chong

Exactly. And this is really the opportunity to experiment.

That is why having a platform with minimal setup is so important. These ideas no longer have to remain theoretical discussions. Instead of saying, “Wouldn’t it be interesting if this worked,” you can actually try it.

Someone could run an experiment, spend a small amount of money, and potentially discover something meaningful.

Michael Frazis

That seems like a core part of what you are building. This is a business as well, so what is the model?

Dr. Hon Weng Chong

The model is infrastructure.

If you look at the current AI ecosystem, researchers and software developers can focus on building models because the hardware layer is already solved by companies like Nvidia and TSMC.

In this field, that infrastructure does not yet exist.

Our goal is to build that foundational layer and enable others to move faster.

Michael Frazis

Some people might ask why you would open this up rather than keep it proprietary.

Dr. Hon Weng Chong

If you look at the history of AI, breakthroughs often come from unexpected places.

For example, AlexNet fundamentally changed the field. It was not something that could have been easily predicted.

That is why it is important to build an open ecosystem. The most valuable ideas may come from people you would not expect.

Collaboration and experimentation are key to accelerating progress.

Michael Frazis

People can access this system today if they have an idea they want to test?

Dr. Hon Weng Chong

Yes.

From a commercial perspective, we offer multiple options.

We sell the hardware units to research labs for around 35,000 dollars. These are typically institutions that already have the capability to culture and maintain cells, and they are using the system to explore deeper scientific questions.

For users without that biological infrastructure, the cloud model is the more accessible option.

Michael Frazis

Because even if you owned the hardware, you would still need the surrounding systems to keep the cells alive.

Dr. Hon Weng Chong

Exactly. You need the full biological setup to maintain and grow the neurons.

Michael Frazis

Why use human neurons rather than something like animal neurons?

Dr. Hon Weng Chong

The main reason is the depth of existing research.

There is far more literature and funding behind human neuronal systems, particularly from the biomedical and clinical research space.

In science, we are building on existing knowledge, so it makes sense to leverage what is already well understood.

There are other possibilities. For example, we have experimented with different types of neurons, and there is interest in more unusual systems.

But areas like that tend to lack funding and commercial applications, so progress is slower.

By focusing on human neurons, we can move faster by building on a much larger base of existing research.

Michael Frazis

What is next? Is there another version of this system coming? Is there a product roadmap you can share?

Dr. Hon Weng Chong

Yes, we do have a roadmap.

We are following a development cycle similar to what is often used in hardware, sometimes referred to as a tick-tock model.

This means alternating between major upgrades and incremental improvements.

The current version is the baseline. The next iteration will be a more advanced version, which we refer to as the CR1 Pro.

That will include more electrodes and greater processing capability, allowing it to handle more complex data and workloads.

Further ahead, we are working on the next generation system. While I cannot share too many details, it will likely involve miniaturisation, new ways of interfacing with cells, and improved methods of packaging biological systems.

Michael Frazis

Are there any interesting things about neurons that people might not be aware of?

Dr. Hon Weng Chong

One relatively recent discovery is that not all mammalian neurons behave the same way.

For a long time, it was assumed that neurons across species were broadly similar.

However, research has shown that human neurons tend to be larger than those in animals like mice, while having a similar number of ion channels.

This suggests that human neurons may be able to process more information due to their size and capacity to maintain different electrical states.

Michael Frazis

What do you mean by holding more electrical states?

Dr. Hon Weng Chong

Different parts of a neuron can hold different electrical charges.

When a signal is triggered, it propagates along the neuron in a coordinated wave, similar to a chain reaction. If a neuron can maintain more nuanced electrical states before firing, it may be able to represent more complex information. That effectively increases the dimensionality of the system.

Michael Frazis

So the parameter space could be much larger than what we see in traditional models?

Dr. Hon Weng Chong

Exactly. Much larger.

Another important point is that biological systems do not rely on backpropagation in the same way as traditional machine learning systems. That is one reason they are so energy efficient. In contrast, systems like GPUs consume large amounts of energy during training, partly due to fundamental physical limits related to information processing.

One of the key ideas here comes from the principle that most of the energy used in computation is actually spent when you erase a bit.
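
The principle is not named in the interview, but the statement resembles Landauer's principle, which puts a lower bound of k_B × T × ln 2 on the energy dissipated when one bit is erased. Assuming that is the idea being referred to, the short calculation below evaluates the bound at body temperature. The function name and the choice of 37 °C are illustrative.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant, exact SI value


def landauer_limit_joules(temperature_k):
    """Minimum energy dissipated to erase one bit at the given temperature."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return BOLTZMANN_J_PER_K * temperature_k * math.log(2)


body_temperature_k = 310.15  # 37 degrees Celsius
limit = landauer_limit_joules(body_temperature_k)
print(f"Landauer bound at 37 C: {limit:.3e} J per erased bit")
```

The result is on the order of 3e-21 joules per bit. It is a theoretical floor, and it says nothing on its own about how close any given chip or biological system comes to it.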

Rest of interview here.